Building Blocks

The purpose of the BB assessment is to evaluate the suitability of the BBs identified and catalogued in Task 1.5 for use within the DE4A project. It thus bridges outputs from WP1 and requirements from WP4 to provide input for the technical, operational and administrative considerations in the architectural assessments carried out here. Over 40 BBs have been considered for this assessment, 31 of which are assessed in the first phase of this work. The results of the assessment show that there is a mature and acceptable stock of solution building blocks that can be considered as potential candidates for implementation by the pilots, either in their entirety or partially, with the needed upgrades.

Theoretical background

Objectives and scope

To reach the goal outlined above, this section delves into the architectural evaluation of the building blocks catalogued as useful for DE4A. It is important to note that the term “building block” (BB) in the context of this assessment refers to a Solution Building Block in the TOGAF sense.

The most important step in assessing the BBs is determining a methodology that supports a common description framework for the BBs, while providing the means for determining the quantitative and/or qualitative evaluation criteria of the considered BBs. The outcome of the assessment is a succinct list of recommendations for BB use by the pilots in WP4. In addition to defining the methodology, a gap analysis is performed based on both the pilots’ requirements and the common description framework of the BBs, considering the results from the assessment, the project requirements and the common PSA principles. The overall process of conceptual considerations, empirical evaluation, gap analysis and piloting recommendations is denoted as the DE4A generic methodology for architecture building blocks evaluation.

Available methodologies

In order to provide continuity and justification of the methodology that is being developed, we first outline and assess the suitability of the currently available methodologies in view of the implementation context and the objectives of the DE4A project. To that end, both generic EU/EC assessment methodologies and past LSP project-specific methodologies are considered.

Common assessment method for standards and specifications (CAMSS)

CAMSS [12] is part of the ISA² interoperability solutions evaluation toolkit for public administrations, businesses and citizens. It provides a method to assist in the assessment of ICT standards and specifications. The main objective of CAMSS is achieving interoperability and avoiding vendor lock-in. In that sense, CAMSS criteria evaluate (among other things) the openness of standards and specifications. This is done by employing the CAMSS tools and adapting the evaluation according to the needs of an individual Member State.

In the context of DE4A, relying solely on CAMSS does not provide the means for BB suitability and gap analysis in relation to the piloting needs. Moreover, it does not provide any selection criteria or a taxonomy for consistent mapping of the different BBs onto a common comparable framework.

Past project-specific methodologies

eSENS

eSENS developed its own methodology for BB assessment, which is mainly an adaptation of CAMSS and the Asset Description Metadata Schema (ADMS), supported by inputs from the eSENS deliverable D6.1 (see Table 5 in eSENS D3.1). Its objective is to propose a documentation format and to define criteria for the maturity and sustainability assessment of building blocks. The overall framework consists of three steps: 1) the Consideration step; 2) the Assessment step; and 3) the Recommendation step. It produces a list of assessment criteria to be used for BB evaluation. These criteria, however, are very general and not architecture-specific: they are valid and valuable only if applied in collaboration with the legal, business, organizational, technical and implementation teams.

It is important to note that the assessment methodology employed in eSENS was developed with a different aim from ours: its analysis and recommendations refer to the desired BB qualities that are needed to meet the maturity levels and the sustainability criteria envisaged by the project. Thus, although it produces guidelines for assessment, it does not provide concrete output in terms of actual scores, analysis and recommendations for BBs. Moreover, it does not provide a comparable baseline when multiple BBs have to be considered for the same pilot, and it is based on the assumption that the existing BBs represent the exhaustive list of possible solutions from which a suitable match should be chosen. In the case of DE4A, such an assumption does not hold, as a certain BB may not be mature enough to be recommended for piloting and yet should not be completely disregarded. More importantly, the methodology developed here is used for actual assessment and is to be fine-tuned at a later stage in connection with the general architecture lifecycle development.

TOOP

Like eSENS, the overall idea of the TOOP assessment methodology is to reuse existing frameworks and building blocks provided by CEF, eSENS, and other initiatives. First, an initial inventory of existing e-Government building blocks is proposed. Then, the principles of selection of building blocks for OOP applications are provided, together with high-level views of the architecture. Finally, an analysis of selected building blocks is done with respect to their relevance, applicability, sustainability, need for further development and external interfaces.

The main criteria for inclusion of a building block in TOOP are:

- The specific project requirements;

- The TOOP pilots’ needs;

- Usability in long-term applications (maintenance and support provided).

As a result, the building blocks are categorized into three basic groups:

- BBs that provide capabilities needed by all or most TOOP Pilot Areas;

- BBs that provide capabilities needed or probably needed by some TOOP Pilot Areas;

- BBs that provide capabilities not needed by the TOOP Pilot Areas.
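The three-way grouping above can be illustrated with a small classification routine. This is a minimal sketch only: the function name, the pilot-area labels and the cut-off used for "most" (more than half of the pilot areas) are assumptions made for illustration and are not defined by TOOP.

```python
from enum import Enum


class NeedGroup(Enum):
    ALL_OR_MOST = "needed by all or most pilot areas"
    SOME = "needed or probably needed by some pilot areas"
    NONE = "not needed by the pilot areas"


def categorize(needing_areas, all_areas, most_threshold=0.5):
    """Group a BB by the share of pilot areas that need its capabilities.

    `most_threshold` is a hypothetical cut-off: a share strictly above it
    counts as "all or most". The source text does not quantify "most".
    """
    all_areas = set(all_areas)
    if not all_areas:
        raise ValueError("at least one pilot area is required")
    # Only count areas that actually belong to the known set of pilot areas.
    share = len(set(needing_areas) & all_areas) / len(all_areas)
    if share == 0:
        return NeedGroup.NONE
    if share > most_threshold:
        return NeedGroup.ALL_OR_MOST
    return NeedGroup.SOME


# Hypothetical pilot-area labels, used only to exercise the function.
AREAS = {"area_1", "area_2", "area_3"}
groups = {
    "bb_a": categorize({"area_1", "area_2", "area_3"}, AREAS),
    "bb_b": categorize({"area_2"}, AREAS),
    "bb_c": categorize(set(), AREAS),
}
```

Keeping the cut-off as a parameter makes explicit that the boundary between "most" and "some" is a judgment the assessor has to make.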

TOOP’s criteria are tightly bound to the piloting needs, whereas the rationale behind their choice is OOP-specific rather than generic. The methodology here follows a similar line of reasoning, but differs in the conceptual framework, which is more formally defined and made reusable by other projects as well.

ISA² Interim Evaluation

The interim evaluation [13] aimed to assess how well the ISA² Programme has performed since its start in 2016 and whether its existence continues to be justified. Based on stakeholders’ views, opinions and public consultation, it evaluated the implementation of the programme based on seven criteria and identified several points for improvement.

The evaluation criteria considered were: Relevance (the alignment between the objectives of the programme and the current needs and problems experienced by stakeholders); Effectiveness (the extent to which the programme has achieved its objectives); Efficiency (the extent to which the programme’s objectives are achieved at a minimum cost); Coherence (the alignment between the programme and comparable EU initiatives as well as the overall EU policy framework); EU added value (the additional impacts generated by the programme, as opposed to leaving the subject matter in the hands of Member States); Utility (the extent to which the programme meets stakeholders’ needs); and Sustainability (the likelihood that the programme’s results will last beyond its completion).

However, the interim evaluation does not provide a specific methodology, either in terms of criteria choice or in terms of architectural or future piloting recommendations. Its value lies mainly in the identification of possible gaps that exist within the current EU architecture framework even prior to the implementation of the available building blocks. In that sense, its main recommendations for prospective actions are raising awareness beyond national administrations, moving from user-centric to user-driven solutions, and working towards increased sustainability.

Our work integrates the interim evaluation criteria already at the stage of cataloguing the BBs relevant in the DE4A context. More importantly, it takes into consideration the methodological gaps identified in that evaluation in terms of awareness, user-driven solutions and sustainability prescriptions, and it integrates specific technical, administrative and operational aspects when extracting the recommendations for the pilots.

EAAF

The Enterprise Architecture Assessment Framework (EAAF) [14] assists the US government in assessing and reporting on its enterprise architecture activity and maturity, as well as in advancing the use of enterprise architecture to guide political decisions on IT investments. In addition to providing the methods for the assessment, EAAF also identifies the measurement areas and criteria by which government agencies are expected to use the architecture to drive performance improvements. This is integrated into the so-called Performance Improvement Lifecycle, where points for improvement are identified and translated into specific actions.

In that sense, the framework provides a good overall methodology for the assessment of DE4A BBs. Following its guidelines, in order to perform the technical assessment, the architects, together with the relevant project partners (mainly from WP1 and WP4):

• Identify and prioritize the BBs considering the pilots’ needs and in view of the project goals and objectives;

• Determine specific methodological steps for gap analysis, using common or shared information assets and information technology assets;

• Quantify/qualify and assess the performance to verify compliance with the pilots’ requirements and provide a report on gap closure; and

• Assess feedback on the pilots’ performance in order to enhance the architecture and fine-tune the assessment methodology for future implementation decisions.

Methodological considerations

The need to develop a generic methodology that integrates some aspects of the standardized methodologies, but does not rely on a single one, is based on several considerations:

- The assessment methodologies currently available either focus on alignment of the BB specifications or are only concerned with the maturity of the solution provided by the building blocks;

- They do not provide a clear definition of the common principles for assessment;

- They do not allow for a phased approach to the assessment and are applicable either to a single BB or to a finalized solution architecture (Note: Although EAAF prescribes the principles for a phased assessment, it does not delineate the phases explicitly and only gives a requirement for the overall outcome of the assessment).

As a result, the architect cannot develop an assessment for multiple BBs with varying levels of complexity, nor perform a comparative evaluation to determine the best fit for a particular solution architecture.

The methodology developed here is novel in that it addresses the points above and is also generic in the sense that it can be reused by other large-scale projects for similar purposes. It incorporates the assessment principles of existing standards-based methodologies (like CAMSS and EAAF) taking into account the architecture feasibility and sustainability, but it also generalizes these principles over the context of implementation required by DE4A.

Methodology

In order to account for both the piloting recommendations criteria and the performance assessment criteria, the overall methodology requires a phased approach. Therefore, it consists of two phases:

I) The first phase takes stock of the entire list of BBs that can have potential use in the project and as part of the piloting. Then, a conceptual and an empirical framework for evaluation is developed – the former enables the gap analysis of the BBs, whereas the latter allows for qualitative and comparative analysis of the BBs, as well as extraction of concrete recommendations for piloting. The first phase essentially corresponds to the first three points of the EAAF.

II) The second phase will account for the complete list of project artefacts and will provide empirical validation for the results and recommendations from the first phase. In addition, reassessment of the previous gaps will be performed. This phase will mainly be realized in close collaboration with the pilots: direct feedback via surveys and questionnaires on BBs’ performance will be obtained and the initial conceptual framework will be fine-tuned accordingly. The second phase corresponds to the last point of the EAAF.

Conceptual framework

In this section, we first catalogue the BBs that are to be considered by the assessment. This step considers the output from D1.5 and establishes a relation to the internal project environment. Then we establish a common conceptualization of the key elements, which is based on the Digital Services Model, Section 2.2 of the Study on “The feasibility and scenarios for the long-term sustainability of the Large Scale Pilots”. With that, a relation to the external project environment is established. Finally, a basic assessment framework is developed to enable the grading of the BBs on several maturity aspects: technical, administrative and operational. The output of the assessment will allow us to perform the gap analysis and will also guide the extraction of the piloting recommendations.

The Digital Services Model: A five-layered approach

The LSPs so far have developed building blocks that enable cross-border interoperability based on standards, specifications and common code/components. Therefore, moving beyond the pilot projects and towards actual deployment, it is crucial to develop a structure in which the digital services and the elements they are composed of can be conceptualized.

To establish a conceptual model, it is important to clearly set out the key terminology that is used in relation to the Digital Service Infrastructure (DSI) for the provision of cross-border public services. CEF provides an overarching framework suitable for this purpose, called the Digital Services Model (DSM). It takes into account the deliverables of the LSPs, the stakeholders and the roles they can take on, and the drivers behind the dynamics of this complex ecosystem.

The Digital Services Model is needed not only to establish a common terminology and framework, but also to analyse the needs and requirements for the future deployment of any digital services, enabling continuity between the developed methodologies and the LSPs. Thus, it presents the different levels of granularity which need to be taken into consideration for the overall management of the DSI for the provision of public services.

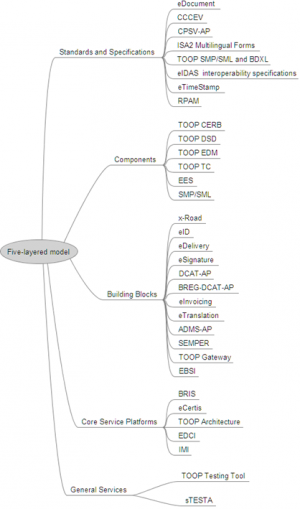

The following elements represent the main part of the DSM taxonomy:

- Standards and Specifications;

- Common code or Components;

- Building Blocks;

- Core Service Platforms;

- Generic Services.

Standards and Specifications have been used by all the LSPs for the development of the digital services. They play a central role in interoperability because they ensure that systems share commonalities in key areas, enabling them to communicate with one another.

Components are the common code that has been developed for the building blocks. Building blocks are made up of several components (e.g. a timestamp component/functionality). These are often referred to as modules in the deliverables of the LSPs. A component can be either BB-specific or used in several BBs.

Essentially, all the solutions derived from the LSPs are ultimately building blocks in the sense that they are services that can be integrated as part of other services. Because these building blocks have the most obvious potential for reuse across different domains (or Core Service Platforms), they can be seen as a specific layer within the set of digital services.

Core Service Platforms enable the provision of cross-border digital services in different domains, like eHealth, eJustice and eProcurement. These are the platforms where all the different BBs for a specific service (e.g. eHealth services or eID services) are brought together and made available, enabling service providers to take up and reuse the services as part of their own services. The Core Service Platform (CSP) level should eventually enable the Member States to interact with other Member States through the use of building blocks (via the Generic Services).

It is important to determine what building blocks have been developed by an LSP, as well as which of these are CSP-specific and which are reusable. The CSP-specific blocks are called domain blocks (e.g. ePrescription is specific to eHealth) and the reusable blocks are called building blocks (e.g. eID can be reused in various domains).
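The distinction between domain blocks and reusable building blocks can be sketched as a small data structure. This is an illustrative sketch under stated assumptions: the `Block` class and the rule that a block tied to exactly one CSP domain is a domain block are hypothetical simplifications, and the domains assigned to eID below are examples only, not a statement of where eID is actually deployed.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Block:
    """A block together with the CSP domains in which it is used (hypothetical model)."""
    name: str
    domains: frozenset

    def __post_init__(self):
        if not self.domains:
            raise ValueError(f"{self.name}: at least one CSP domain is required")

    @property
    def kind(self) -> str:
        # Assumption: CSP-specific means tied to exactly one domain;
        # anything used in more than one domain is treated as reusable.
        return "domain block" if len(self.domains) == 1 else "building block"


# ePrescription is specific to eHealth (example taken from the text).
e_prescription = Block("ePrescription", frozenset({"eHealth"}))
# eID is reusable across domains; the domain set here is illustrative only.
e_id = Block("eID", frozenset({"eHealth", "eJustice", "eProcurement"}))

catalogue = {block.name: block.kind for block in (e_prescription, e_id)}
```

In practice, whether a block is CSP-specific is an architectural judgment rather than a simple count of domains, so the rule above should be read as a placeholder for that judgment.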

The reusable building blocks are the strongest common element between the various CSPs. They therefore need to meet the needs and requirements of all the CSPs. This underlines the links between the building blocks and the CSPs, and the need to manage both of these simultaneously.

Generic Services is the level of abstraction at which the Member States integrate or connect to the CSPs. These interconnections are necessary to link up a Member State so it can provide cross-border access and use of national eIDs, electronic health records, national procurement platforms, national eJustice platforms and public services for foreign business. Each Member State has to ensure that these existing systems at national level are linked up with the CSPs through Generic Services in order to be cross-border enabled.

To define a common taxonomy for BB description prior to the actual assessment, the relevant BBs are catalogued in view of the five-layered model described above. This is represented in Figure 42.